Meet DiffMoog: A Differentiable Modular Synthesizer with a Comprehensive Set of Modules Typically Found in Commercial Instruments

Synthesizers have been an integral part of music production, allowing musicians to create a wide range of sounds and explore new sonic territories. Over the years, synthesizers have evolved from large, analog machines to compact, digital instruments. With advancements in technology and machine learning, researchers and developers are constantly pushing the boundaries of what synthesizers can do.

One such groundbreaking development is DiffMoog, a differentiable modular synthesizer with a comprehensive set of modules typically found in commercial instruments. DiffMoog has gained attention for its ability to integrate into neural networks and automate sound matching. In this article, we will explore the features and potential applications of DiffMoog, as well as the challenges it poses and the future possibilities it holds.

The Need for Differentiable Synthesizers

Traditional sound design involves manually adjusting various parameters of a synthesizer to achieve the desired sound. This process requires expertise and can be time-consuming. To simplify this process, researchers have developed differentiable synthesizers that can automatically optimize parameters based on input sounds.

A differentiable synthesizer is built entirely from operations whose gradients can be computed. Automatic differentiation, the mechanism behind backpropagation in neural network training, can therefore measure how a change in each synthesizer parameter affects the output audio. This lets the synthesizer sit inside a neural network's training loop, so sound designers and musicians can use machine learning to create and manipulate sounds more efficiently.
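To make the idea concrete, here is a minimal sketch in plain Python. It is not DiffMoog code: the one-parameter sine "synthesizer", the names `sine`, `mse`, and `grad_amplitude`, and the learning rate are all assumptions chosen for illustration. The gradient is written out by hand here; an automatic differentiation framework would derive it for you.

```python
import math

SAMPLE_RATE = 8000   # assumed sample rate for this toy example
NUM_SAMPLES = 256

def sine(amplitude, freq_hz):
    """A one-parameter 'synthesizer': a sine wave scaled by `amplitude`."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE)
            for n in range(NUM_SAMPLES)]

def mse(a, b):
    """Mean squared error between two equal-length signals."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def grad_amplitude(amplitude, freq_hz, target):
    """Hand-derived d(MSE)/d(amplitude): mean of 2 * (A*s[n] - t[n]) * s[n]."""
    total = 0.0
    for n, t in enumerate(target):
        s = math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE)
        total += 2 * (amplitude * s - t) * s
    return total / len(target)

# Target sound: the same oscillator with an amplitude we pretend not to know.
target = sine(0.8, 440.0)

# Gradient descent on the amplitude, starting from a poor guess.
amplitude = 0.1
for _ in range(200):
    amplitude -= 0.5 * grad_amplitude(amplitude, 440.0, target)

final_loss = mse(sine(amplitude, 440.0), target)
```

After the loop, `amplitude` has moved from 0.1 to approximately 0.8 and `final_loss` is close to zero. Amplitude is an easy parameter to recover this way; as discussed later in the article, frequency is much harder.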

Introducing DiffMoog

DiffMoog is a differentiable modular synthesizer developed by researchers from Tel-Aviv University and The Open University, Israel. It was designed specifically for AI-guided sound synthesis and provides an extensive set of modules typically found in commercial instruments.

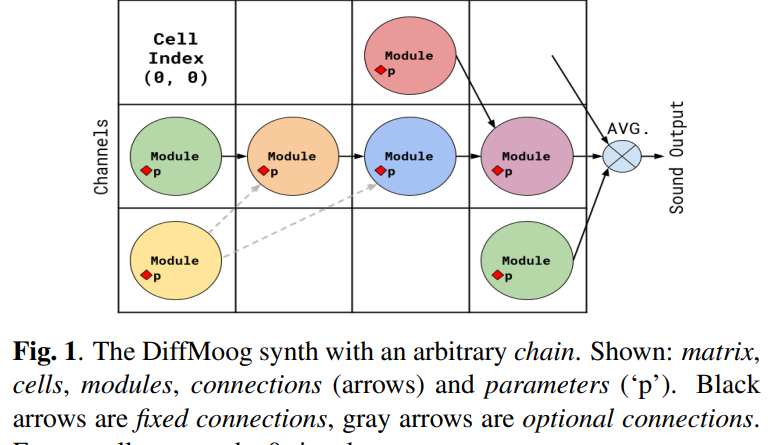

The modular architecture of DiffMoog allows users to create custom signal chains by combining different modules. These modules include modulation capabilities, low-frequency oscillators, filters, and envelope shapers, among others. The comprehensive set of modules ensures that DiffMoog can replicate the functionality of traditional commercial synthesizers.
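The sketch below shows what such a signal chain might look like in plain Python: an oscillator, an LFO used as a tremolo, an envelope shaper, and a simple filter. It does not use DiffMoog's actual API; every function name and parameter value here is a hypothetical stand-in, and the one-pole low-pass is just the simplest filter that fits in a few lines.

```python
import math

SAMPLE_RATE = 16000
NUM_SAMPLES = 16000  # one second of audio

def oscillator(freq_hz, num_samples, amplitude=1.0):
    """Sine oscillator."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE)
            for n in range(num_samples)]

def lfo_tremolo(signal, rate_hz, depth):
    """Low-frequency oscillator modulating gain between 1 - depth and 1."""
    out = []
    for n, x in enumerate(signal):
        lfo = 0.5 * (1.0 + math.sin(2 * math.pi * rate_hz * n / SAMPLE_RATE))
        out.append(x * (1.0 - depth * lfo))
    return out

def adsr(num_samples, attack, decay, sustain_level, release):
    """Piecewise-linear ADSR envelope; stage lengths are given in samples."""
    env = []
    for n in range(num_samples):
        if n < attack:
            env.append(n / attack)
        elif n < attack + decay:
            env.append(1.0 - (1.0 - sustain_level) * (n - attack) / decay)
        elif n < num_samples - release:
            env.append(sustain_level)
        else:
            env.append(sustain_level * (num_samples - n) / release)
    return env

def one_pole_lowpass(signal, alpha):
    """One-pole low-pass filter: y[n] = y[n-1] + alpha * (x[n] - y[n-1])."""
    y, out = 0.0, []
    for x in signal:
        y += alpha * (x - y)
        out.append(y)
    return out

# Patch the modules together: oscillator -> tremolo -> envelope -> filter.
raw = oscillator(220.0, NUM_SAMPLES)
modulated = lfo_tremolo(raw, rate_hz=5.0, depth=0.5)
envelope = adsr(NUM_SAMPLES, attack=800, decay=1600, sustain_level=0.6, release=3200)
shaped = [x * e for x, e in zip(modulated, envelope)]
audio = one_pole_lowpass(shaped, alpha=0.2)
```

Each stage takes a signal and returns a signal, so the modules can be reordered or swapped freely. Every operation above is a smooth arithmetic step, which is the property a differentiable implementation relies on.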

Integration with Neural Networks

One of the standout features of DiffMoog is its integration with neural networks. Because the synthesizer is differentiable, a network can be trained to predict the synthesizer parameters that reproduce a given audio input, and DiffMoog then renders the sound from those predicted parameters. This is what makes automated sound matching possible.

The researchers behind DiffMoog have also developed an open-source platform that combines the synthesizer with an end-to-end sound-matching framework. This platform utilizes a signal-chain loss and an encoder network to optimize sound synthesis and achieve high-fidelity reproduction.
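The article does not spell out how the signal-chain loss is computed. One plausible reading, sketched below as an assumption rather than as the authors' definition, is to compare the predicted and target signals after each module in the chain instead of only at the final output. The function names, the per-stage weights, and the use of a waveform mean absolute error (where a spectral distance would be more typical) are all illustrative choices.

```python
def mean_abs_error(a, b):
    """Mean absolute difference between two equal-length signals."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def signal_chain_loss(predicted_stages, target_stages, weights=None):
    """Weighted sum of per-stage distances along a signal chain.

    Each argument is a list of signals, one per module output, in chain order.
    This is a hypothetical formulation, not DiffMoog's implementation.
    """
    if len(predicted_stages) != len(target_stages):
        raise ValueError("both chains must have the same number of stages")
    if weights is None:
        weights = [1.0] * len(predicted_stages)
    return sum(w * mean_abs_error(p, t)
               for w, p, t in zip(weights, predicted_stages, target_stages))

# Toy two-stage chain: the oscillator output matches, the filtered output does not.
target_stages = [[0.0, 1.0, 0.0, -1.0], [0.0, 0.5, 0.0, -0.5]]
predicted_stages = [[0.0, 1.0, 0.0, -1.0], [0.0, 0.25, 0.0, -0.25]]

loss = signal_chain_loss(predicted_stages, target_stages)
```

The appeal of a loss like this is that an error can be attributed to the stage where it first appears, which gives the optimizer a more direct signal than a single comparison at the end of the chain.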

Applications and Potential Impact

DiffMoog has significant implications for both the music industry and AI sound synthesis research. By automating sound matching and replication, it reduces the reliance on manual parameter adjustments and lets musicians focus more on creativity and experimentation.

Additionally, DiffMoog’s differentiable nature opens up possibilities for integrating it into various machine learning applications. For example, it can be used in sound design for movies and video games, where automated sound matching can save time and effort. It can also be utilized in the field of AI-assisted music composition, where neural networks can generate music based on desired sound characteristics.

Challenges and Future Directions

While DiffMoog represents a significant advancement in the field of sound synthesis, it is not without its challenges. Accurately replicating common sounds remains a major hurdle, because telling similar sounds apart requires precise frequency estimation.

To address this challenge, the researchers have experimented with different loss configurations and neural architectures. They found that the Wasserstein loss, a measure of the distance between probability distributions, can help overcome the gradient problems that arise when frequency is estimated with a spectral loss. Further research is needed to refine audio loss functions, optimization techniques, and alternative neural network structures to achieve greater precision in emulating typical sounds.
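A small experiment illustrates why a Wasserstein-style distance can help with frequency. The sketch below is an idealized toy, not the paper's loss: it uses pure sines whose frequencies fall exactly on DFT bins, a naive DFT, and the one-dimensional Wasserstein-1 distance computed from cumulative sums. All function names are assumptions made for this example.

```python
import cmath
import math

N = 64  # samples per frame; frequencies are given in whole cycles per frame

def magnitude_spectrum(cycles):
    """Normalized DFT magnitudes (bins 0..N/2) of a sine with `cycles` periods."""
    signal = [math.sin(2 * math.pi * cycles * n / N) for n in range(N)]
    mags = []
    for k in range(N // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * n / N)
                  for n, x in enumerate(signal))
        mags.append(abs(acc))
    total = sum(mags)
    return [m / total for m in mags]  # sums to 1, like a probability distribution

def l1_distance(p, q):
    """Bin-by-bin spectral distance."""
    return sum(abs(a - b) for a, b in zip(p, q))

def wasserstein_1d(p, q):
    """Wasserstein-1 distance on a unit-spaced grid: sum of absolute CDF gaps."""
    cdf_gap, total = 0.0, 0.0
    for a, b in zip(p, q):
        cdf_gap += a - b
        total += abs(cdf_gap)
    return total

target = magnitude_spectrum(10)
near = magnitude_spectrum(12)   # 2 bins away from the target
far = magnitude_spectrum(20)    # 10 bins away from the target

l1_near, l1_far = l1_distance(target, near), l1_distance(target, far)
w_near, w_far = wasserstein_1d(target, near), wasserstein_1d(target, far)
```

In this setup the bin-by-bin distance is about 2 for both candidates, because the spectral peaks do not overlap in either case, so it gives no hint about which guess is closer. The Wasserstein distance is about 2 bins for the near guess and about 10 bins for the far one, so it keeps shrinking as the estimated frequency approaches the target.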

Conclusion

DiffMoog, with its differentiable modular architecture and comprehensive set of modules, represents a significant advancement in the field of sound synthesis. Its integration with neural networks and its AI-guided sound-matching capabilities open up new possibilities for musicians and sound designers. While challenges remain, such as accurately replicating common sounds, the future looks promising for differentiable synthesizers like DiffMoog.

As researchers continue to explore improved audio loss functions, optimization techniques, and alternative neural network structures, we can expect further advancements in sound synthesis and machine learning. DiffMoog and similar synthesizers have the potential to revolutionize the way we create and interact with sound, bringing us closer to new sonic frontiers.
