Bayesian Optimization with Large Language Models for Natural Language Preference Elicitation

In today’s world, where large language models are becoming increasingly sophisticated, researchers and developers are constantly exploring new ways to leverage these models for various applications. One such application is preference elicitation, where the goal is to understand and elicit a user’s preferences for different items or options. Bayesian optimization, combined with large language models (LLMs), has emerged as a powerful approach for efficient preference elicitation. In this article, we will explore Bayesian optimization for preference elicitation with large language models and understand how it works.

Understanding Preference Elicitation

Preference elicitation refers to the process of understanding and capturing an individual’s preferences for a set of items or options. Traditionally, this process involved direct rating or comparison of items by the user. However, in scenarios where the user is unfamiliar with most of the items, this approach may not be feasible or efficient.

Large language models, such as GPT-3, have the potential to address this challenge. These models can generate human-like text and engage in conversational dialogues, making them suitable for preference elicitation. By having back-and-forth conversations with an LLM, it is possible to intuitively elicit someone’s preferences without relying on prior data about those preferences.

However, there are certain limitations to this approach. Simply prompting an LLM with item descriptions and instructing it to have a preference-eliciting conversation can be computationally expensive. Moreover, monolithic LLMs lack the strategic reasoning to guide the conversation towards exploring the most relevant preferences and avoiding irrelevant tangents. This is where Bayesian optimization comes into play.

Bayesian Optimization for Preference Elicitation

Bayesian optimization is a framework that combines probabilistic modeling and decision theory to optimize functions that are expensive to evaluate. It has been widely used in various domains, such as hyperparameter tuning in machine learning and experimental design in scientific research.

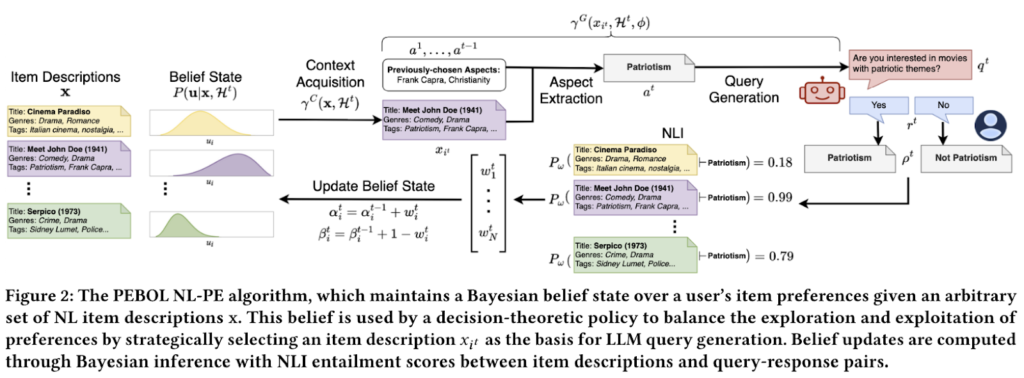

When applied to preference elicitation with large language models, Bayesian optimization augments the language understanding capabilities of LLMs with a principled framework for efficient preference elicitation. One such algorithm that embodies this approach is PEBOL (Preference Elicitation with Bayesian Optimization Augmented LLMs).

How PEBOL Works

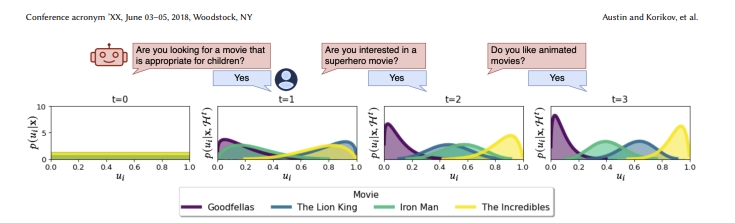

PEBOL operates in a series of conversational turns, where it aims to identify the user’s most preferred items. Here’s a high-level overview of how PEBOL works:

- Modeling User Preferences: PEBOL assumes the existence of a hidden “utility function” that determines the user’s preference for each item based on its description. It uses probability distributions, specifically Beta distributions, to model the uncertainty in these utilities.

- Natural Language Queries: At each conversation turn, PEBOL employs decision-theoretic strategies like Thompson Sampling and Upper Confidence Bound to select one item description. It then prompts the LLM to generate a short, aspect-based query about that item. For example, it might ask, “Are you interested in movies with patriotic themes?”.

- Inferring Preferences via NLI: When the user responds to the query, PEBOL doesn’t take their response at face value. Instead, it uses a Natural Language Inference (NLI) model to predict the likelihood of the user’s response implying a preference for or against each item description.

- Bayesian Belief Updates: Based on the predicted preferences as observations, PEBOL updates its probabilistic beliefs about the user’s utilities for each item. This allows it to systematically explore unfamiliar preferences while leveraging what it has already learned.

- Repeat: The process repeats, with PEBOL generating new queries focused on the items or aspects it is most uncertain about, ultimately aiming to identify the user’s most preferred items.

Benefits of Bayesian Optimization for Preference Elicitation

The key innovation of using Bayesian optimization with large language models for preference elicitation lies in leveraging the language generation capabilities of LLMs while strategically guiding the conversation flow. This approach reduces the amount of context needed for each LLM prompt and provides a principled way to balance the exploration-exploitation trade-off.

By combining the strengths of LLMs and Bayesian optimization, PEBOL offers an intriguing new paradigm for building AI systems that can converse with users in natural language to better understand their preferences and provide personalized recommendations.

Evaluating PEBOL’s Performance

To assess the effectiveness of PEBOL, researchers conducted experiments using simulated preference elicitation dialogues across three datasets: MovieLens25M, Yelp, and Recipe-MPR. They compared PEBOL against a monolithic GPT-3.5 baseline, called MonoLLM, which was prompted with full item descriptions and dialogue history.

The evaluation was based on the Mean Average Precision at 10 (MAP@10) metric over 10 conversational turns with simulated users. Here are the key findings:

- PEBOL achieved significant improvements in MAP@10 compared to MonoLLM. After just 10 turns, PEBOL showed MAP@10 improvements of 131% on Yelp, 88% on MovieLens, and 55% on Recipe-MPR over MonoLLM.

- PEBOL demonstrated incremental belief updates, making it more robust against catastrophic errors. In contrast, MonoLLM exhibited major performance drops, particularly on the Recipe-MPR dataset between turns 4-5.

- PEBOL consistently outperformed MonoLLM under simulated user noise conditions. On Yelp and MovieLens, MonoLLM performed poorly across all noise levels, while on Recipe-MPR, it trailed behind PEBOL’s UCB, Greedy, and Entropy Reduction acquisition policies.

Future Directions and Conclusion

While PEBOL represents a promising first step in preference elicitation with large language models using Bayesian optimization, there are still areas for further exploration and improvement.

Future versions of PEBOL could explore generating contrastive multi-item queries to enhance the understanding of user preferences. Additionally, integrating this preference elicitation approach into broader conversational recommendation systems could open up new possibilities for personalized recommendations.

In conclusion, Bayesian optimization for preference elicitation with large language models offers a novel and efficient approach to understanding and eliciting user preferences. By combining the strengths of LLMs and Bayesian optimization, algorithms like PEBOL pave the way for more sophisticated conversational recommender systems and personalized recommendations. As research in this area progresses, we can expect further advancements and applications in diverse domains.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on LinkedIn. Do join our active AI community on Discord.

Explore 3600+ latest AI tools at AI Toolhouse 🚀.

Read our other blogs on AI Tools 😁

If you like our work, you will love our Newsletter 📰