Meet Verba 1.0: Enhance Your AI with Local RAG and Ollama Integration

Retrieval-augmented generation (RAG) is a cutting-edge technique in artificial intelligence that combines the strengths of retrieval-based approaches with generative models. This integration allows for creating high-quality, contextually relevant responses by leveraging vast datasets. RAG has significantly improved the performance of virtual assistants, chatbots, and information retrieval systems by ensuring that generated responses are accurate and contextually appropriate. The synergy of retrieval and generation enhances the user experience by providing detailed and specific information.

One of the primary challenges in AI is delivering precise and contextually relevant information from extensive datasets. Traditional methods often struggle to maintain the necessary context, leading to generic or inaccurate responses. This problem is particularly evident in applications requiring detailed information retrieval and a deep understanding of context. The difficulty of integrating retrieval and generation has been a significant barrier to advancing AI applications in various fields.

Current methods in the field include keyword-based search engines and advanced neural network models like BERT and GPT. While these tools have significantly improved information retrieval, they often cannot combine retrieval and generation effectively. Keyword-based search engines can retrieve relevant documents but do not generate new insights. Generative models, on the other hand, can produce coherent text but may struggle to retrieve the most pertinent information.

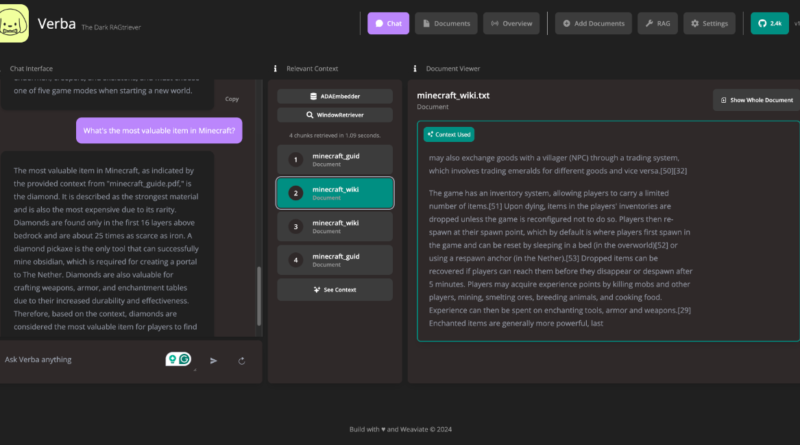

Researchers from Weaviate have introduced Verba 1.0, a solution that bridges retrieval and generation to enhance the overall effectiveness of AI systems. Verba 1.0 integrates state-of-the-art RAG techniques with a context-aware database. The tool is designed to improve the accuracy and relevance of AI-generated responses by combining advanced retrieval and generative capabilities. The result is a versatile tool that can handle diverse data formats and provide contextually accurate information.

The Power of Verba 1.0

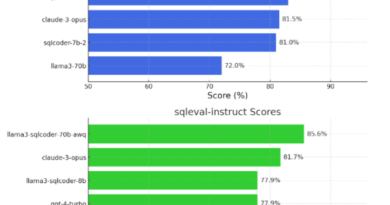

Verba 1.0 supports a variety of models, including Llama 3 served through Ollama, HuggingFace’s MiniLMEmbedder, Cohere’s Command R+, Google’s Gemini, and OpenAI’s GPT-4. These models cover embedding and generation, and Verba can process various data types, such as PDFs and CSVs. The tool’s customizable approach enables users to select the most suitable models and techniques for their specific use cases. For instance, Llama 3 running under Ollama provides local embedding and generation, while HuggingFace’s MiniLMEmbedder offers an efficient local embedding model. Cohere’s Command R+ can be used for embedding and generation, and Google’s Gemini and OpenAI’s GPT-4 add further hosted options.

The integration of these models and techniques in Verba 1.0 allows users to run state-of-the-art RAG locally, leveraging the power of open-source models. By running RAG locally, users gain privacy and control over their data, since documents and queries do not have to leave their machine. Local execution also reduces dependency on cloud services and, depending on the hardware, can shorten response times by avoiding network round trips.
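To make the retrieve-then-generate flow concrete, here is a minimal sketch in Python. It is not Verba’s code: the word-overlap retriever, the prompt wording, and the function names `retrieve`, `build_prompt`, and `answer` are all assumptions made for illustration, and the generator is passed in as a plain function so that any local model could be plugged in.

```python
from typing import Callable, List


def retrieve(query: str, documents: List[str], top_k: int = 2) -> List[str]:
    """Toy retriever: rank documents by the number of words shared with the query."""
    query_terms = set(query.lower().split())
    scored = [
        (len(query_terms & set(doc.lower().split())), doc) for doc in documents
    ]
    # Drop documents with no overlap, then keep the best-scoring ones.
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [doc for _, doc in ranked[:top_k]]


def build_prompt(query: str, context: List[str]) -> str:
    """Put the retrieved chunks in front of the question (hypothetical prompt format)."""
    joined = "\n".join(f"- {chunk}" for chunk in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


def answer(query: str, documents: List[str], generate: Callable[[str], str]) -> str:
    """Retrieve context, build the prompt, and hand it to a generator function."""
    context = retrieve(query, documents)
    return generate(build_prompt(query, context))
```

In a real setup the `generate` argument would wrap a call to a locally hosted model, and the retriever would query a vector database rather than count shared words.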

Enhanced Information Retrieval

Verba 1.0 is designed to improve both information retrieval and response generation. Its hybrid search and semantic caching features enable faster and more accurate data retrieval. Hybrid search combines semantic search with keyword search, while semantic caching saves results and retrieves them based on the semantic meaning of a query, so a similar question can reuse an earlier answer. This approach has enhanced query precision and the ability to handle diverse data formats, making Verba a versatile solution for numerous applications. The tool can also suggest autocompletions and apply filters before performing RAG, which further improves its performance.
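The idea of blending keyword and semantic scores can be sketched in a few lines of Python. This is a simplified illustration, not the ranking that Verba or Weaviate actually performs: the weighted sum, the `alpha` parameter as used here, the function names, and the hand-written two-dimensional vectors are all assumptions standing in for a real embedding model and fusion algorithm.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_score(query: str, text: str) -> float:
    """Fraction of query words that appear verbatim in the text."""
    terms = query.lower().split()
    words = set(text.lower().split())
    return sum(t in words for t in terms) / len(terms) if terms else 0.0


def hybrid_search(
    query: str,
    query_vec: Sequence[float],
    docs: List[Tuple[str, Sequence[float]]],
    alpha: float = 0.5,
) -> List[Tuple[float, str]]:
    """Score each (text, vector) pair; alpha=1 is pure vector, alpha=0 pure keyword."""
    results = []
    for text, vec in docs:
        score = alpha * cosine(query_vec, vec) + (1 - alpha) * keyword_score(query, text)
        results.append((score, text))
    return sorted(results, key=lambda r: r[0], reverse=True)
```

Setting `alpha` closer to 0 favors exact term matches, which helps with names and identifiers, while values closer to 1 favor documents that are similar in meaning even when they share no words with the query.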

Notable results from Verba 1.0 include the successful handling of complex queries and the efficient retrieval of relevant information, both of which are attributed to its semantic caching and hybrid search capabilities. Verba’s support for various data formats, including PDFs, CSVs, and unstructured data, makes it a valuable asset for diverse applications. The reported results point to improvements in query precision and response accuracy, highlighting its potential to transform AI applications.
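A semantic cache can be pictured as a lookup keyed by query embeddings instead of exact strings. The sketch below is an assumption about how such a cache could work in general, not a description of Verba’s internals: the class name `SemanticCache`, the 0.9 similarity threshold, and the in-memory list are hypothetical, and the query vectors would come from an embedding model in practice.

```python
import math
from typing import List, Optional, Sequence, Tuple


class SemanticCache:
    """Return a stored answer when a new query is close enough to a cached one."""

    def __init__(self, threshold: float = 0.9) -> None:
        self.threshold = threshold  # minimum cosine similarity for a cache hit
        self._entries: List[Tuple[List[float], str]] = []

    @staticmethod
    def _cosine(a: Sequence[float], b: Sequence[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0

    def put(self, query_vec: Sequence[float], answer: str) -> None:
        """Remember the answer produced for this query embedding."""
        self._entries.append((list(query_vec), answer))

    def get(self, query_vec: Sequence[float]) -> Optional[str]:
        """Return the answer of the most similar cached query, or None on a miss."""
        best_score, best_answer = 0.0, None
        for vec, answer in self._entries:
            score = self._cosine(query_vec, vec)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None
```

On a hit, the retrieval and generation steps can be skipped entirely, which is where the speedup comes from. The threshold trades reuse against the risk of returning an answer to a question that only looks similar.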

Open Source and Ollama Integration

Verba 1.0 leverages the power of open-source models to provide users with a flexible and customizable solution. Open-source models like Llama3 and MiniLMEmbedder offer state-of-the-art capabilities and can be easily integrated into Verba’s framework. This integration allows users to benefit from the advancements made by the open-source community and contribute to the development of AI applications.

Furthermore, Verba 1.0 integrates with Ollama, a tool for running open-source large language models (LLMs) on the user’s own machine. This gives Verba a fully local path for both embedding and generation. Running LLMs locally with Ollama keeps documents and prompts under the user’s control and avoids network round trips to a hosted API, although actual response times depend on the local hardware. This integration allows users to harness the power of open-source models while maintaining control over their data.
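For readers who have not used Ollama, the sketch below shows what a direct call to a locally running Ollama server can look like from Python, using only the standard library. It is not Verba’s integration code. It assumes Ollama is listening on its default address `http://localhost:11434` and that a model named `llama3` has already been pulled; the helper names `build_request` and `generate` are made up for this example.

```python
import json
import urllib.request

# Default address of a local Ollama server (assumed; adjust if configured differently).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to Ollama and return the generated text.

    Requires a running Ollama server; raises an error if none is reachable.
    """
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]
```

Because the request never leaves `localhost`, the prompt and any retrieved document text stay on the machine, which is the privacy benefit described above.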

The Future of RAG and Verba

The introduction of Verba 1.0 represents a significant milestone in the field of retrieval-augmented generation. The integration of state-of-the-art RAG techniques, open-source models, and Ollama’s capabilities allows users to run cutting-edge AI applications locally. This advancement addresses the challenges of precise information retrieval and context-aware response generation, improving the overall effectiveness of AI systems.

As AI continues to advance, the combination of retrieval and generation techniques will play a crucial role in delivering accurate and contextually relevant responses. Verba 1.0 sets the stage for further innovations in the field, enabling researchers and developers to push the boundaries of AI applications. By leveraging the power of open-source models and local execution, Verba paves the way for more accessible and customizable AI solutions.

In conclusion, Verba 1.0 is a groundbreaking tool that enables the local execution of state-of-the-art RAG techniques. By integrating open-source models and leveraging Ollama’s capabilities, Verba provides users with enhanced information retrieval and contextually accurate response generation. This versatile solution has the potential to transform AI applications across various domains, offering improved privacy, control, and performance.
