QoQ and QServe: A New Frontier in Model Quantization Transforming Large Language Model Deployment

Quantization, a method integral to computational linguistics, is essential for managing the vast computational demands of deploying large language models (LLMs). It simplifies data, thereby facilitating quicker computations and more efficient model performance. However, deploying LLMs is inherently complex due to their colossal size and the computational intensity required. Effective deployment strategies must balance performance, accuracy, and computational overhead.

The Challenges of Quantization in Large Language Model Deployment

In LLMs, traditional quantization techniques convert high-precision floating-point numbers into lower-precision integers. While this process reduces memory usage and accelerates computation, it often incurs significant computational overhead. This overhead can degrade model accuracy, as the precision reduction can lead to substantial losses in data fidelity.

To overcome these challenges, researchers from MIT, NVIDIA, UMass Amherst, and MIT-IBM Watson AI Lab introduced the Quattuor-Octo-Quattuor (QoQ) algorithm. This novel approach refines quantization by employing progressive group quantization, which mitigates the accuracy losses typically associated with standard quantization methods. By quantizing weights to an intermediate precision and refining them to the target precision, the QoQ algorithm ensures that all computations are adapted to the capabilities of current-generation GPUs.

The QoQ Algorithm: Enhancing Quantization for Large Language Models

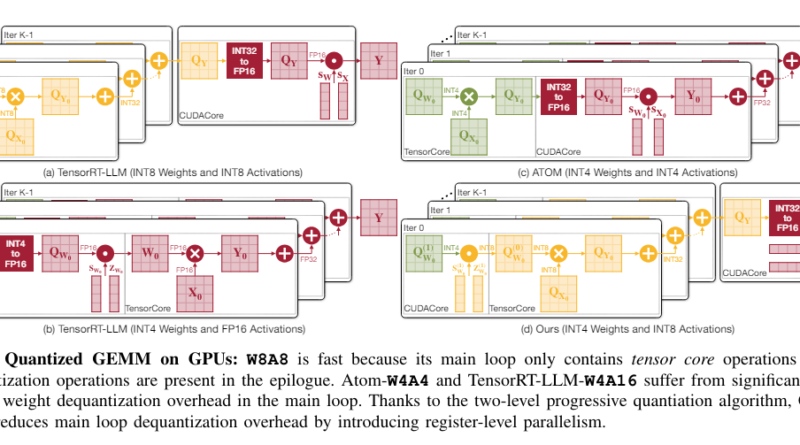

The QoQ algorithm utilizes a two-stage quantization process. Initially, weights are quantized to 8 bits using per-channel FP16 scales; these intermediates are further quantized to 4 bits. This approach enables General Matrix Multiplication (GEMM) operations on INT8 tensor cores, enhancing computational throughput and reducing latency. The algorithm also incorporates SmoothAttention, a technique that adjusts the quantization of activation keys to optimize performance further.

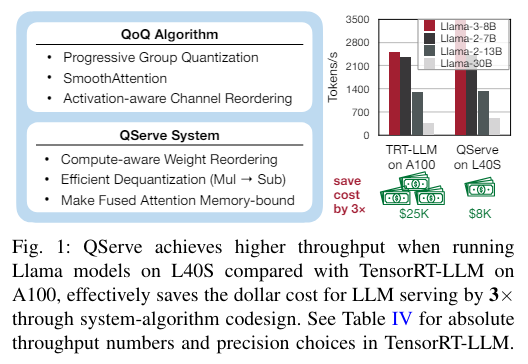

Performance evaluations of the QoQ algorithm indicate substantial improvements over previous methods. In testing, QoQ improved the maximum achievable serving throughput of Llama-3-8B models by up to 1.2 times on NVIDIA A100 GPUs and up to 1.4 times on L40S GPUs. These enhancements in throughput are critical for real-time applications where processing speed is of utmost importance.

Introducing QServe: A System to Support QoQ in Large Language Model Deployment

The QServe system was developed to support the deployment of the QoQ algorithm. QServe provides a tailored runtime environment that maximizes the efficiency of LLMs by exploiting the algorithm’s full potential. It integrates seamlessly with current GPU architectures, facilitating operations on low-throughput CUDA cores and significantly boosting processing speed. This system design reduces the quantization overhead by focusing on compute-aware weight reordering and fused attention mechanisms, essential for maintaining throughput and minimizing latency.

Performance evaluations of the QServe system demonstrate its effectiveness in enhancing LLM serving throughput. On the L40S platform, QServe achieved throughput enhancements of up to 3.5 times compared to the same model on A100 GPUs. These impressive results highlight the potential of QServe in reducing the cost of LLM serving and making large language models more accessible for a wide range of applications.

The Impact of QoQ and QServe in Large Language Model Deployment

The advancements brought by the QoQ algorithm and QServe system in the field of large language model deployment are significant. By addressing the significant computational overhead and accuracy loss inherent in traditional quantization methods, QoQ and QServe markedly enhance LLM serving throughput. The results from the implementation demonstrate up to 2.4 times faster processing on advanced GPUs, substantially reducing both the computational demands and the economic costs associated with LLM deployment.

The benefits of QoQ and QServe extend beyond improved performance and cost savings. These advancements pave the way for broader adoption and more effective use of large language models in real-world applications. With faster processing speeds and improved efficiency, LLMs can be deployed in various domains, including natural language processing, machine translation, speech recognition, and more.

In conclusion, the QoQ algorithm and QServe system represent a new frontier in model quantization, transforming large language model deployment. By combining innovative quantization techniques with a tailored runtime environment, these advancements significantly enhance LLM serving throughput, reduce costs, and open up new possibilities for the application of large language models. As the field continues to evolve, we can expect further advancements in model quantization and deployment strategies, leading to even more efficient and accessible large language models.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on LinkedIn. Do join our active AI community on Discord.

Explore 3600+ latest AI tools at AI Toolhouse 🚀.

If you like our work, you will love our Newsletter 📰