Smaug-Llama-3-70B-Instruct: The Future of Open-Source Conversational AI Unveiled

Artificial intelligence (AI) has reshaped many fields through advanced models for natural language processing (NLP). NLP enables computers to understand, interpret, and respond to human language in useful ways. The field covers applications such as text generation, translation, and sentiment analysis, with significant impact on industries like healthcare, finance, and customer service. Successive generations of NLP models have driven these advances, steadily extending what AI can do in understanding and generating human language.

Despite these advancements, developing models that can effectively handle complex multi-turn conversations remains a persistent challenge. Existing models often fail to maintain context and coherence over long interactions, leading to suboptimal performance in real-world applications. Maintaining a coherent conversation over multiple turns is crucial for applications like customer service bots, virtual assistants, and interactive learning platforms.

The Need for Improved Conversational AI Models

Current methods for improving conversational AI models include fine-tuning on diverse datasets and integrating reinforcement learning techniques. Popular models like GPT-4-Turbo and Claude-3-Opus have set performance benchmarks, yet they still have room to improve in handling intricate dialogues and staying consistent. These models rely on large-scale datasets and complex algorithms to strengthen their conversational abilities, but maintaining context over long conversations remains a significant hurdle. Their performance, while impressive, leaves clear room for improvement in dynamic and contextually rich interactions.

Introducing Smaug-Llama-3-70B-Instruct: A New Benchmark in Open-Source Conversational AI

Researchers from Abacus.AI have introduced Smaug-Llama-3-70B-Instruct, which they claim is one of the best open-source models and a rival to GPT-4 Turbo. The model aims to improve performance in multi-turn conversations through a new training recipe. Abacus.AI’s approach focuses on the model’s ability to understand and generate contextually relevant responses, with the goal of surpassing previous models in the same category.

Smaug-Llama-3-70B-Instruct builds on Meta-Llama-3-70B-Instruct. The Abacus.AI team applied a new Smaug recipe, designed to improve performance on real-world multi-turn conversations, to that base model. Through fine-tuning on new datasets, the model is reported to outperform its predecessor at understanding and generating contextually appropriate responses.

The Advanced Techniques Behind Smaug-Llama-3-70B-Instruct

The Smaug-Llama-3-70B-Instruct model owes its reported gains to its training protocol, which emphasizes real-world conversational data so that the model can handle diverse and complex interactions. Because it works with popular frameworks such as the Hugging Face transformers library, the model can be deployed for a variety of text-generation tasks.

The underlying transformer architecture lets the model attend to the whole conversation so far when producing each response, which supports detailed and nuanced replies. Combined with the transformers library’s standard loading and generation tooling, this allows Smaug-Llama-3-70B-Instruct to produce contextually appropriate responses across multiple turns.
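As a rough illustration of deploying the model through the transformers library, the sketch below keeps a multi-turn history and passes the whole of it to the model on every turn. The repository name `abacusai/Smaug-Llama-3-70B-Instruct`, the helper names `add_turn` and `generate_reply`, and the generation settings are assumptions for illustration and are not taken from the article; the `<|eot_id|>` stop token follows the usual Llama 3 chat convention.

```python
from typing import Dict, List

# Assumed Hugging Face repository name; the article does not state it.
MODEL_ID = "abacusai/Smaug-Llama-3-70B-Instruct"

Message = Dict[str, str]


def add_turn(history: List[Message], role: str, content: str) -> List[Message]:
    """Return a new history with one more message appended (hypothetical helper)."""
    if role not in {"system", "user", "assistant"}:
        raise ValueError(f"unknown role: {role}")
    return history + [{"role": role, "content": content}]


def generate_reply(history: List[Message], max_new_tokens: int = 256) -> str:
    """Generate the next assistant turn from the full conversation history.

    Imports are local so the history helper works without the heavy
    dependencies. Loading a 70B model requires substantial GPU memory.
    """
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForCausalLM.from_pretrained(
        MODEL_ID, torch_dtype=torch.bfloat16, device_map="auto"
    )
    # The chat template converts the message list into the model's prompt format.
    inputs = tokenizer.apply_chat_template(
        history, add_generation_prompt=True, return_tensors="pt", return_dict=True
    ).to(model.device)
    # Stop on either the regular EOS token or the Llama 3 end-of-turn token.
    terminators = [
        tokenizer.eos_token_id,
        tokenizer.convert_tokens_to_ids("<|eot_id|>"),
    ]
    output_ids = model.generate(
        **inputs, max_new_tokens=max_new_tokens, eos_token_id=terminators
    )
    # Decode only the newly generated tokens, not the prompt.
    prompt_length = inputs["input_ids"].shape[-1]
    return tokenizer.decode(output_ids[0, prompt_length:], skip_special_tokens=True)


# Build a short multi-turn conversation; the second user turn depends on the first.
history: List[Message] = []
history = add_turn(history, "user", "Recommend a book about dragons.")
history = add_turn(history, "assistant", "You might enjoy The Hobbit.")
history = add_turn(history, "user", "Who wrote it?")
print(len(history))
```

Calling `generate_reply(history)` would then produce the next assistant turn. Sending the full history each time is what lets the model resolve "it" in the last question; a production setup would load the model once and reuse it rather than reloading per call.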

Benchmarking Performance of Smaug-Llama-3-70B-Instruct

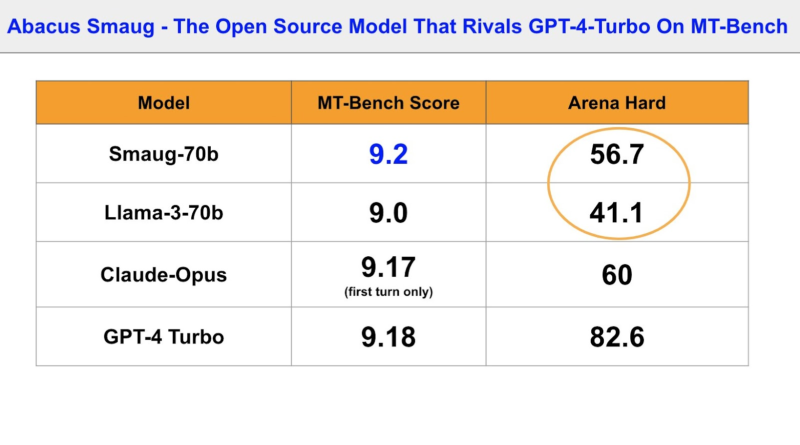

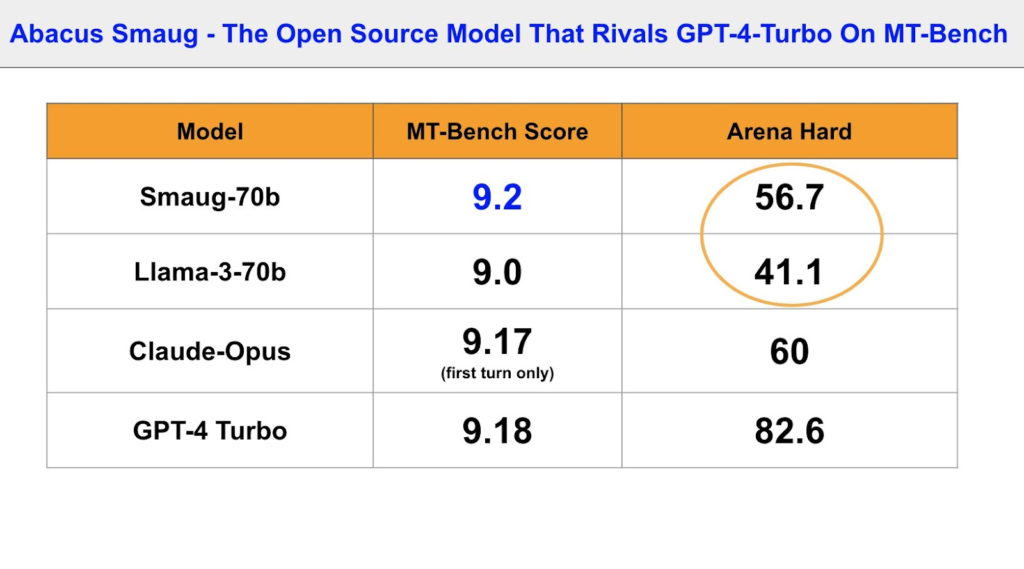

The performance of the Smaug-Llama-3-70B-Instruct model is demonstrated through several benchmarks. MT-Bench scores reflect the model’s ability to maintain context and deliver coherent responses across turns. The model scored 9.4 on the first turn and 9.0 on the second, for an average of 9.2, compared with reported scores of 9.2 for Llama-3 70B and 9.18 for GPT-4 Turbo.
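The MT-Bench average quoted above is consistent with a simple mean of the two per-turn scores. The short check below assumes both turns carry equal weight; the function name `mt_bench_average` is hypothetical.

```python
from statistics import mean
from typing import Sequence


def mt_bench_average(turn_scores: Sequence[float]) -> float:
    """Average per-turn scores, assuming each turn is weighted equally."""
    return round(mean(turn_scores), 2)


# Per-turn scores as reported in the article.
smaug_average = mt_bench_average([9.4, 9.0])
print(smaug_average)
```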

However, real-world tasks require complex reasoning and planning, which MT-Bench does not fully capture. Arena Hard, a newer benchmark that measures an LLM’s ability to solve complex tasks, showed significant gains for Smaug over Llama-3: Smaug scored 56.7 compared to Llama-3’s 41.1, a difference of 15.6 points that suggests stronger handling of demanding, real-world prompts.
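To put the Arena Hard numbers in perspective, the sketch below computes the absolute and relative gain from the two scores quoted in the article. The helper name `score_gain` is hypothetical, and the calculation is plain arithmetic rather than part of the benchmark itself.

```python
from typing import Tuple


def score_gain(new_score: float, base_score: float) -> Tuple[float, float]:
    """Return (absolute gain in points, relative gain in percent)."""
    absolute = new_score - base_score
    relative = absolute / base_score * 100
    return absolute, relative


# Arena Hard scores as reported in the article.
absolute, relative = score_gain(56.7, 41.1)
print(f"{absolute:.1f} points, {relative:.1f}% relative improvement")
```

On these figures the relative improvement works out to roughly 38% over the Llama-3 baseline.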

Conclusion

Abacus.AI’s release of Smaug-Llama-3-70B-Instruct is a notable step forward for open-source conversational AI. Its reported MT-Bench and Arena Hard results highlight its potential in applications that depend on long, coherent dialogue. By targeting the challenge of maintaining context and coherence over long interactions, the model sets a reference point for future open-source work and points toward more sophisticated and reliable AI-driven communication tools.

As AI continues to evolve, models like Smaug-Llama-3-70B-Instruct open up exciting possibilities for improved human-machine interactions. The ability to understand and generate contextually relevant responses in multi-turn conversations is crucial for various applications, including customer service, virtual assistants, and interactive learning platforms. With ongoing advancements in NLP models, the future of conversational AI looks promising, enabling more natural and meaningful interactions between humans and machines.
