Aloe Impact on Healthcare Technology

In today’s digital era, the use of advanced technologies like large language models (LLMs) has become increasingly indispensable in various domains, including healthcare. These LLMs have the potential to analyze vast amounts of medical information, providing valuable insights that were once solely reliant on human expertise. The recent breakthrough in healthcare technology comes in the form of Aloe: a family of fine-tuned open healthcare LLMs that achieves state-of-the-art results through model merging and prompting strategies. Let’s delve into the details of Aloe and its significance in revolutionizing healthcare.

The Need for Competitive Open Healthcare LLMs

In the field of medical technology, the availability of open-source models that can rival proprietary systems is crucial. These open healthcare LLMs not only foster transparency but also ensure accessibility and innovation in healthcare technology advancements. However, there has been a pressing challenge in developing competitive open models that can match the performance of proprietary solutions.

Traditionally, healthcare LLMs are improved through pre-training on domain-specific datasets and fine-tuning for specific tasks. However, these techniques often encounter scalability issues when it comes to handling larger models and complex medical data. Thus, the development of advanced strategies to enhance the performance of open healthcare LLMs has become imperative.

Introducing Aloe: Fine-tuned Open Healthcare LLMs

To address the limitations of traditional healthcare LLMs, researchers from the Barcelona Supercomputing Center (BSC) and Universitat Politècnica de Catalunya – Barcelona Tech (UPC) have introduced the Aloe models. These models employ innovative techniques such as model merging and instruct tuning, leveraging the strengths of existing models and enhancing them through sophisticated training regimens.

Model Merging: Enhancing Model Performance

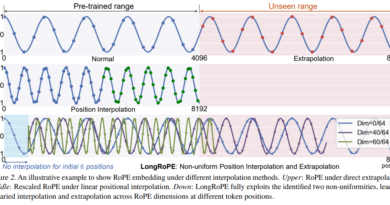

Model merging is a pivotal strategy employed by Aloe to boost the performance of healthcare LLMs. By merging multiple models, Aloe creates a cohesive and comprehensive knowledge base that surpasses the capabilities of individual models. This merging process involves integrating the best features from existing models, resulting in an enhanced and more accurate system for medical data processing.

Instruct Tuning: Improving Model Efficiency

Another key technique utilized by Aloe is instruct tuning. This approach involves fine-tuning the models based on specific instructions or prompts. By providing explicit instructions, Aloe can optimize the models’ performance for a wide range of medical inquiries, resulting in improved accuracy and reliability. Instruct tuning ensures that the models can effectively handle complex medical data and generate accurate responses.

Advanced Training Strategies for Aloe Models

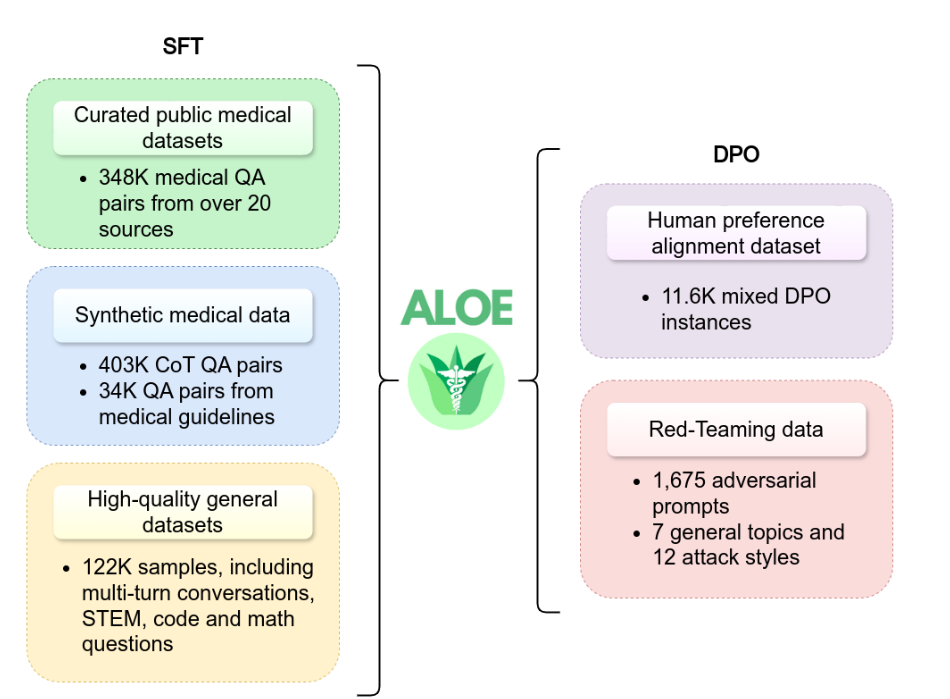

The Aloe models incorporate advanced training strategies to achieve state-of-the-art results. They are trained using a dataset that includes a combination of public data sources and synthetic data generated through advanced Chain of Thought (CoT) techniques. This diverse training data enables the models to learn from a wide range of medical contexts and enhances their ability to provide accurate and contextually appropriate responses.

Additionally, the Aloe models undergo a rigorous red teaming process to assess potential risks and ensure their safety in real-world deployment. This process involves subjecting the models to simulated attack scenarios and evaluating their robustness against various adversarial techniques. By conducting red teaming exercises, the researchers can identify and address vulnerabilities, making the Aloe models more secure and reliable.

Achieving State-of-the-Art Results and Ethical Alignment

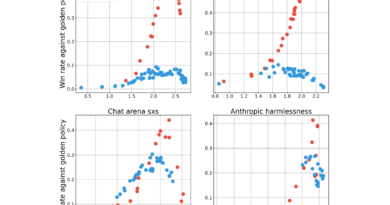

The performance of the Aloe models has set new benchmarks in the healthcare domain. In evaluations against medical question-answering benchmarks like MedQA and PubmedQA, the Aloe models have shown significant improvements in accuracy compared to previous open models. These improvements, often exceeding 7%, highlight the superior capability of Aloe in handling complex medical inquiries.

Furthermore, the Aloe models prioritize ethical alignment. They undergo an alignment phase with Direct Preference Optimization (DPO), which ensures that their outputs adhere to ethical standards and minimize bias. The models are extensively tested against various bias and toxicity metrics to eliminate any potential ethical concerns. This commitment to ethical alignment sets Aloe apart from its predecessors and emphasizes the responsible use of LLMs in healthcare applications.

The Impact of Aloe on Healthcare Technology

The introduction of Aloe represents a significant breakthrough in the field of healthcare technology. By merging cutting-edge technologies and ethical considerations, Aloe enhances the accuracy and reliability of medical data processing. The models’ state-of-the-art performance and ethical alignment ensure that advancements in healthcare technology are accessible and beneficial to all.

With Aloe, the democratization of sophisticated medical knowledge becomes a reality. These fine-tuned open healthcare LLMs pave the way for improved decision-making tools in the healthcare industry, enabling healthcare professionals and researchers to make informed and accurate judgments. The accessibility and transparency offered by open healthcare LLMs like Aloe drive innovation and ultimately contribute to the advancement of global healthcare.

In conclusion, Aloe: a family of fine-tuned open healthcare LLMs, revolutionizes the healthcare landscape by achieving state-of-the-art results through model merging and prompting strategies. With their enhanced performance, ethical alignment, and accessibility, Aloe models offer a promising future for healthcare technology, empowering medical professionals and improving patient outcomes.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on LinkedIn. Do join our active AI community on Discord.

Explore 3600+ latest AI tools at AI Toolhouse 🚀.

If you like our work, you will love our Newsletter 📰