Meet Phind-70B: An Artificial Intelligence (AI) Model That Closes the Execution Speed and Code Generation Quality Gap with GPT-4 Turbo

The field of Artificial Intelligence (AI) is rapidly advancing, and its impact can be seen across various industries. In recent years, Large Language Models (LLMs) based on Natural Language Processing, Understanding, and Generation have proven to be game-changers in the world of AI. These models have shown exceptional potential in a wide range of applications, from language translation to content generation.

One area where LLMs have made a significant impact is in coding. Developers often face challenges in writing efficient and error-free code. With the introduction of AI-assisted coding, developers can now rely on the expertise of LLMs to improve their coding experiences. These AI models can generate code snippets, provide instant feedback, and even suggest solutions to complex coding problems.

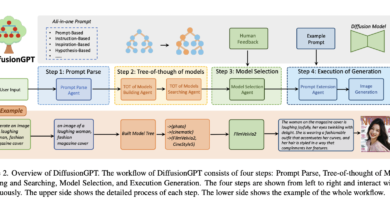

Introducing Phind-70B, an AI model specifically designed to close the execution speed and code generation quality gap with GPT-4 Turbo. Developed by Phind, the model builds upon CodeLlama-70B and is fine-tuned on an additional 50 billion tokens. It aims to improve the coding experience for developers by offering faster responses and higher-quality code generation.

One of the key advantages of Phind-70B is its execution speed. The model can generate answers on technical topics at up to 80 tokens per second. This means that developers wait less for feedback and code suggestions, allowing them to work more efficiently.

In addition to its speed, Phind-70B also excels in code generation quality. With a 32K token context window, the model can generate complex code sequences and understand deeper contexts. This capability enables Phind-70B to provide thorough and relevant coding solutions, making it a valuable tool for developers.
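To make the context window concrete, here is a minimal sketch of a token budget check. It assumes "32K" means 32,768 tokens (it could also mean 32,000), and the function name `remaining_output_budget` is hypothetical, not part of any Phind API.

```python
# Assumption: "32K" is read as 32 * 1024 = 32,768 tokens.
CONTEXT_WINDOW = 32 * 1024


def remaining_output_budget(prompt_tokens: int, window: int = CONTEXT_WINDOW) -> int:
    """Return how many tokens are left for the answer once the prompt is counted.

    The prompt and the generated answer share the same context window,
    so a longer prompt leaves less room for generated code.
    """
    if prompt_tokens < 0:
        raise ValueError("prompt_tokens must be non-negative")
    return max(0, window - prompt_tokens)


# A 30,000-token prompt (for example, several source files) still leaves room to answer.
budget_large_prompt = remaining_output_budget(30_000)

# A prompt larger than the window leaves no room at all.
budget_oversized_prompt = remaining_output_budget(40_000)
```

In practice the prompt token count would come from the model's own tokenizer; the numbers above are illustrative only.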

On benchmarks, Phind-70B has shown strong results. On HumanEval, it scored 82.3%, ahead of GPT-4 Turbo at 81.1%. On Meta’s CRUXEval dataset it trails GPT-4 Turbo slightly, scoring 59% compared to 62%. These benchmarks may not accurately reflect the model’s effectiveness in practical applications, however. In real-world workloads, Phind-70B is reported to show strong code generation skills and to produce thorough code samples without hesitation.

Speed is the other part of Phind-70B’s appeal: it runs about four times faster than GPT-4 Turbo. The model is served with NVIDIA’s TensorRT-LLM library on the newest H100 GPUs, which significantly improves inference efficiency. This optimization lets Phind-70B return results quickly without a change to the model itself.
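A quick back-of-the-envelope calculation shows what these figures mean for waiting time. The sketch below assumes that the "four times" figure refers to token throughput, which would put GPT-4 Turbo at roughly 20 tokens per second; that baseline is derived here, not a measured number, and the 800-token answer length is a hypothetical example.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to stream num_tokens at a steady rate, ignoring network and queueing delays."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


PHIND_TPS = 80.0  # "up to 80 tokens per second", as quoted in the article
SPEEDUP = 4.0     # "four times faster than GPT-4 Turbo", as quoted in the article
BASELINE_TPS = PHIND_TPS / SPEEDUP  # assumption: implies about 20 tokens per second

ANSWER_TOKENS = 800  # hypothetical length of a detailed code answer

phind_seconds = generation_seconds(ANSWER_TOKENS, PHIND_TPS)
baseline_seconds = generation_seconds(ANSWER_TOKENS, BASELINE_TPS)

print(f"Phind-70B: {phind_seconds:.0f} s, baseline: {baseline_seconds:.0f} s")
```

Under these assumptions, an 800-token answer takes about 10 seconds at the peak rate versus about 40 seconds at the implied baseline. Real-world latency also depends on load and prompt length.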

To train and serve Phind-70B, the development team has partnered with cloud providers SF Compute and AWS, which supply the infrastructure for training and deploying the model. Phind-70B can be tried for free without a login, allowing developers to experience its capabilities firsthand. For those seeking more features and higher limits, a Phind Pro subscription is available.

The Phind-70B development team has also expressed their commitment to collaboration and creativity. They plan to make the weights for the Phind-34B model public soon and eventually publish the weights of the Phind-70B model as well. This open approach encourages knowledge sharing and fosters a culture of cooperation within the AI community.

In conclusion, Phind-70B is a remarkable AI model that closes the execution speed and code generation quality gap with GPT-4 Turbo. Its exceptional speed and code quality make it a valuable tool for developers looking to enhance their coding experiences. By leveraging the power of LLMs, Phind-70B promises to revolutionize AI-assisted coding and contribute to the advancement of the AI field as a whole.
