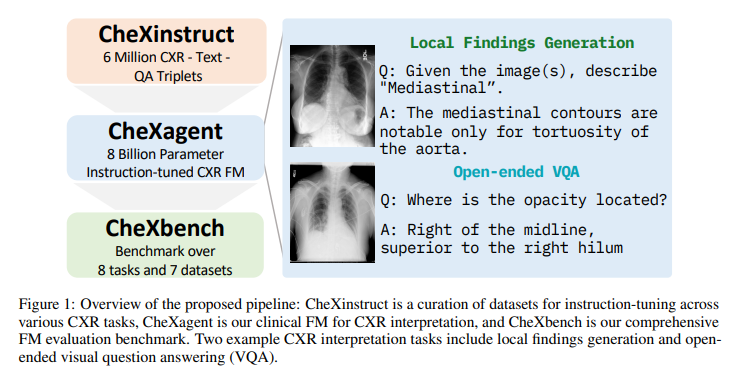

Introducing CheXagent: Revolutionizing Chest X-ray Analysis and Summarization

Artificial intelligence (AI) has reshaped fields such as machine translation, natural language understanding, and computer vision. In medical imaging, it has made significant strides in interpreting chest X-rays (CXRs), a crucial diagnostic tool. Vision-language foundation models (FMs) open new avenues for automated CXR analysis, with the potential to support clinical decision-making and improve patient outcomes.

The Challenge in CXR Interpretation

Developing effective FMs for CXR interpretation poses several challenges. First, large-scale vision-language datasets are scarce, which hampers both training and evaluation. Second, medical data is complex, and the nuanced relationship between what an image shows and how it is interpreted clinically is hard to capture. As a result, traditional methods often fall short of interpreting medical images such as CXRs accurately.

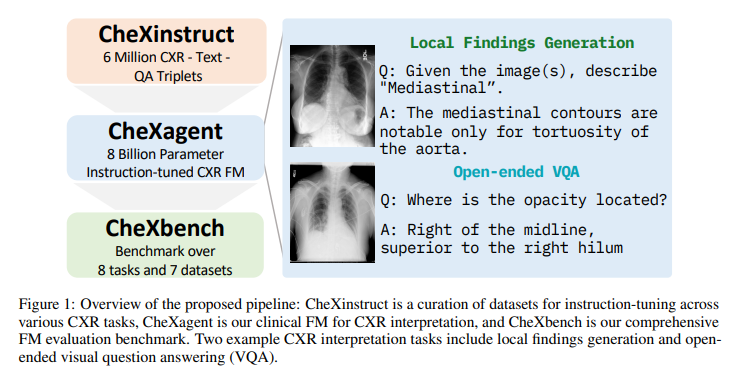

Introducing CheXagent and CheXinstruct

To address these challenges, researchers from Stanford University and Stability AI have introduced CheXinstruct, a comprehensive instruction-tuning dataset curated from 28 publicly available datasets [1]. CheXinstruct aims to improve the ability of FMs to interpret CXRs accurately. Alongside it, the researchers have developed CheXagent, an instruction-tuned FM for CXR interpretation with 8 billion parameters [1].
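To make the idea of an instruction-tuning dataset concrete, here is a minimal sketch of what a single record might look like. The field names, the task label, the image path, and the `<image>` placeholder are hypothetical and chosen for illustration; they are not the actual CheXinstruct schema.

```python
from dataclasses import dataclass, asdict
import json


@dataclass(frozen=True)
class InstructionExample:
    """One hypothetical instruction-tuning record (not the real CheXinstruct schema)."""
    task: str          # which interpretation task the record belongs to
    image_path: str    # location of the chest X-ray image
    instruction: str   # natural-language prompt given to the model
    answer: str        # target text the model should learn to produce


def to_prompt(example: InstructionExample) -> str:
    """Render a record as one training string; <image> marks where visual tokens would go."""
    return f"<image>\nInstruction: {example.instruction}\nAnswer: {example.answer}"


example = InstructionExample(
    task="view_classification",
    image_path="images/example_0001.png",  # placeholder path, assumed for illustration
    instruction="Is this chest X-ray an AP, PA, or lateral view?",
    answer="PA",
)

print(json.dumps(asdict(example), indent=2))
print(to_prompt(example))
```

Pooling many source datasets into one record format like this is what would let a single model be trained on heterogeneous tasks with the same objective.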

CheXagent combines three components: a clinical large language model (LLM) capable of understanding radiology reports, a vision encoder for representing CXR images, and a bridging network that integrates the vision and language modalities. This integration enables CheXagent to analyze and summarize CXRs effectively [1].
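The data flow between these three components can be sketched with toy stand-ins. Everything below is an assumption made for illustration: the class names, the dimensions, the random weights, and in particular the choice of a single linear projection as the bridge. It shows only how image features might be mapped into the language model's embedding space and placed in front of the text prompt, not how CheXagent is actually implemented.

```python
import random
from typing import Dict, List

Vector = List[float]


def random_matrix(rows: int, cols: int, rng: random.Random) -> List[Vector]:
    """Small random weight matrix standing in for learned parameters."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix: List[Vector], vec: Vector) -> Vector:
    """Plain matrix-vector product."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


class ToyVisionEncoder:
    """Splits a flat pixel list into patches and maps each patch to a feature vector."""

    def __init__(self, patch_size: int, feature_dim: int, rng: random.Random) -> None:
        self.patch_size = patch_size
        self.weights = random_matrix(feature_dim, patch_size, rng)

    def encode(self, pixels: Vector) -> List[Vector]:
        if len(pixels) % self.patch_size != 0:
            raise ValueError("pixel count must be a multiple of patch_size")
        patches = [pixels[i:i + self.patch_size]
                   for i in range(0, len(pixels), self.patch_size)]
        return [matvec(self.weights, patch) for patch in patches]


class ToyBridge:
    """Assumed linear projection from vision features to the LLM embedding size."""

    def __init__(self, feature_dim: int, embed_dim: int, rng: random.Random) -> None:
        self.weights = random_matrix(embed_dim, feature_dim, rng)

    def project(self, features: List[Vector]) -> List[Vector]:
        return [matvec(self.weights, feature) for feature in features]


class ToyLanguageModel:
    """Only the input side of an LLM: embeds words and prepends visual tokens."""

    def __init__(self, embed_dim: int, rng: random.Random) -> None:
        self.embed_dim = embed_dim
        self.rng = rng
        self.vocab: Dict[str, Vector] = {}

    def embed(self, text: str) -> List[Vector]:
        vectors = []
        for token in text.lower().split():
            if token not in self.vocab:
                self.vocab[token] = [self.rng.uniform(-0.1, 0.1)
                                     for _ in range(self.embed_dim)]
            vectors.append(self.vocab[token])
        return vectors

    def build_input(self, visual_tokens: List[Vector], prompt: str) -> List[Vector]:
        # Visual tokens come first, followed by the embedded text prompt.
        return visual_tokens + self.embed(prompt)


rng = random.Random(0)
encoder = ToyVisionEncoder(patch_size=4, feature_dim=6, rng=rng)
bridge = ToyBridge(feature_dim=6, embed_dim=8, rng=rng)
llm = ToyLanguageModel(embed_dim=8, rng=rng)

pixels = [rng.random() for _ in range(16)]       # a fake 16-pixel "image"
visual_tokens = bridge.project(encoder.encode(pixels))
sequence = llm.build_input(visual_tokens, "Describe the findings")
print(len(visual_tokens), len(sequence), len(sequence[0]))  # prints: 4 7 8
```

The point of the bridge in this sketch is that the vision encoder and the language model can have different vector sizes; the bridge is the piece that makes their representations compatible.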

Evaluating CheXagent with CheXbench

To evaluate the effectiveness of CheXagent, the researchers have introduced CheXbench [1]. CheXbench provides a systematic way to compare FMs across eight clinically relevant CXR interpretation tasks. It assesses the models’ capabilities in image perception and textual understanding, providing a comprehensive evaluation framework.

According to the authors, CheXagent outperforms both general- and medical-domain FMs on these tasks [1]. On the image perception side, it performs well in view classification, binary disease classification, single- and multi-disease identification, and visual question answering. On the textual understanding side, it generates medically accurate reports and summarizes findings effectively, as validated by expert radiologists [1].
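Comparing models across several tasks comes down to scoring each model's predictions task by task. The following is a minimal sketch of per-task accuracy, assuming each example has a single correct answer; the records are made up, and the code is not CheXbench's actual implementation or metric set.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Each record is (task name, gold answer, predicted answer); all values are invented.
Record = Tuple[str, str, str]


def accuracy_by_task(records: List[Record]) -> Dict[str, float]:
    """Return the fraction of exact-match predictions for every task."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for task, gold, pred in records:
        total[task] += 1
        # Case- and whitespace-insensitive exact match.
        correct[task] += int(gold.strip().lower() == pred.strip().lower())
    return {task: correct[task] / total[task] for task in total}


toy_records: List[Record] = [
    ("view_classification", "PA", "PA"),
    ("view_classification", "AP", "PA"),
    ("binary_disease_classification", "yes", "yes"),
    ("binary_disease_classification", "no", "No"),
]

scores = accuracy_by_task(toy_records)
for task, acc in sorted(scores.items()):
    print(f"{task}: {acc:.2f}")
```

Running the same function on the predictions of several models gives one score per model and task, which is the kind of table a benchmark comparison is built from. Free-text tasks such as report generation would need different metrics or expert review.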

Ensuring Fairness and Transparency

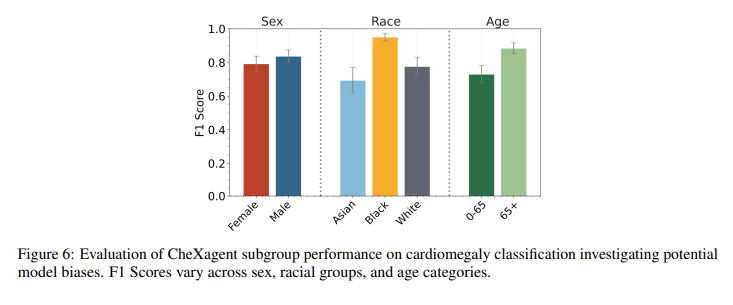

In addition to measuring performance, CheXbench includes a fairness assessment that looks for performance disparities across sex, race, and age groups [1]. Reporting these gaps makes the model's limitations more transparent and helps identify where its outputs may be less reliable for some patient groups.
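One simple way to look for such disparities is to compute the same metric separately for each demographic group and report the largest gap. The sketch below assumes accuracy as the metric and uses invented records and group labels; the paper's own fairness methodology may differ.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Each record is (group label, gold answer, predicted answer); all values are invented.
GroupRecord = Tuple[str, str, str]


def accuracy_by_group(records: List[GroupRecord]) -> Dict[str, float]:
    """Accuracy computed separately for every demographic group."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, gold, pred in records:
        total[group] += 1
        correct[group] += int(gold == pred)
    return {group: correct[group] / total[group] for group in total}


def largest_gap(per_group: Dict[str, float]) -> float:
    """Difference between the best- and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


toy_records: List[GroupRecord] = [
    ("group_a", "yes", "yes"),
    ("group_a", "no", "no"),
    ("group_a", "yes", "no"),
    ("group_a", "no", "no"),
    ("group_b", "yes", "yes"),
    ("group_b", "no", "no"),
    ("group_b", "yes", "yes"),
    ("group_b", "no", "no"),
]

per_group = accuracy_by_group(toy_records)
print(per_group)               # {'group_a': 0.75, 'group_b': 1.0}
print(largest_gap(per_group))  # 0.25
```

A gap of zero would mean the model performs equally well for every group on this metric; with small groups, a gap should be read alongside the group sizes before drawing conclusions.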

Despite CheXagent's strong results, there is room for improvement. Ongoing research and development are needed to refine AI tools like CheXagent and to ensure their equitable and effective use in healthcare.

The Milestone and Future Possibilities

The introduction of CheXagent marks a significant milestone in medical AI and CXR interpretation. The combination of CheXinstruct, CheXagent, and CheXbench represents a holistic approach to improving and evaluating AI in medical imaging [1]. These tools have the potential to enhance clinical decision-making and ultimately improve patient outcomes.

The release of CheXagent and related tools also sets a new benchmark for future research in this vital area. The ongoing advancements in AI and the refinement of models like CheXagent will continue to shape the field of medical imaging and contribute to the advancement of healthcare.

Conclusion

AI, particularly deep learning, has propelled medical imaging forward. CheXinstruct, CheXagent, and CheXbench bring together a comprehensive instruction-tuning dataset, a vision-language model, and an evaluation framework, paving the way for automated CXR analysis and summarization.

CheXagent's strong performance across CXR interpretation tasks demonstrates its potential to support clinical decision-making. Continued research and development remain necessary to refine the model and to ensure fairness and transparency in its outputs.

As AI continues to evolve, we can expect further advances in CXR interpretation, ultimately leading to improved healthcare outcomes for patients.

All credit for this research goes to the researchers of this project.