Researchers from Google AI and Tel-Aviv University Introduce PALP: A Revolutionary Method for Personalized Text-to-Image Models

Text-to-image generation has advanced significantly in recent years. Researchers from Google AI and Tel-Aviv University have now introduced PALP (Prompt-Aligned Personalization), a method that improves the prompt alignment of personalized text-to-image models. It addresses the challenge of generating images of a specific subject from text while staying faithful to the prompt. In this article, we explore how PALP works, how it was evaluated, and where it might be applied.

The Challenge of Text-to-Image Personalization

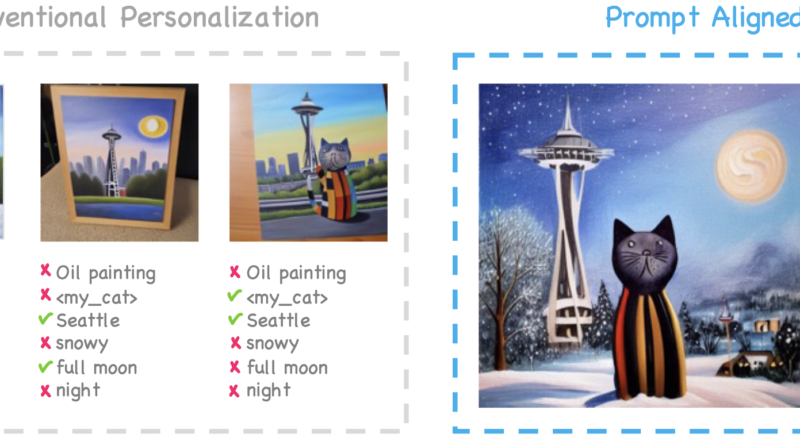

Generating personalized images from text poses a unique set of challenges. A successful text-to-image model must capture specific elements such as location, style, and ambiance, while faithfully aligning with the user’s prompt. Existing methods often compromise either personalization or prompt alignment, leading to suboptimal results.

The primary challenge lies in balancing identity preservation against prompt alignment during personalized image generation. Personalized models are often hard to steer toward a specific prompt and can require extensive prompt engineering and re-sampling. This trade-off hinders the fulfillment of user prompts and compromises subject fidelity.

Introducing PALP: Prompt-Aligned Personalization

PALP aims to overcome the limitations of existing methods by personalizing the model for a single target prompt. This may seem restrictive, but it lets the method concentrate on text alignment, so that the generated scene matches the user’s prompt more closely while still depicting the subject.

To ensure prompt alignment without overfitting the model, PALP leverages pre-trained models’ knowledge. It fine-tunes a pre-trained model on a small set of images representing the target subject, updating specific network weights and optimizing a new word embedding for the subject. This process helps align the generated images more closely with the desired prompt.
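The fine-tuning recipe described above can be illustrated with a deliberately tiny sketch. It is not PALP's training code: the "model" is a per-dimension linear map, `frozen_weights` stands in for pre-trained weights that are left untouched, `bias` stands in for the small set of weights that is updated, and `embedding` plays the role of the new word embedding. All names and numbers are assumptions made for illustration.

```python
import random


def personalize(targets, frozen_weights, steps=200, lr=0.1, seed=0):
    """Toy personalization loop (hypothetical, not the PALP implementation).

    targets: feature vectors standing in for the few subject images.
    frozen_weights: stand-in for pre-trained weights; never modified.
    Returns the learned (embedding, bias) pair.
    """
    rng = random.Random(seed)
    dim = len(frozen_weights)
    # New word embedding for the subject, initialized near zero.
    embedding = [rng.uniform(-0.1, 0.1) for _ in range(dim)]
    # Stand-in for the small subset of network weights that is tuned.
    bias = [0.0] * dim
    for _ in range(steps):
        for target in targets:
            for i in range(dim):
                pred = frozen_weights[i] * embedding[i] + bias[i]
                err = pred - target[i]
                # Gradient step on 0.5 * err**2 for the trainable parts only.
                embedding[i] -= lr * err * frozen_weights[i]
                bias[i] -= lr * err
    return embedding, bias


def predict(frozen_weights, embedding, bias):
    """Apply the toy model: frozen weights times embedding, plus tuned bias."""
    return [w * e + b for w, e, b in zip(frozen_weights, embedding, bias)]
```

Calling `personalize` with two or three nearby target vectors and then `predict` yields an output close to those targets, while `frozen_weights` is unchanged. The point of the sketch is only the split between frozen and trainable parameters; a real diffusion model would update selected network layers and a text-encoder token embedding instead.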

Additionally, PALP adds a Score Distillation Sampling (SDS) term that keeps the personalized model aligned with the target prompt. SDS uses a diffusion model’s noise prediction as a guidance signal, so the personalized model’s predictions are steered toward the target prompt, which improves the alignment of the generated images with complex prompts.
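To make the idea of score-distillation guidance concrete, here is a minimal sketch of a generic SDS-style update on a toy vector. It is not PALP's objective: `toy_noise_predictor` is a hypothetical stand-in for a pre-trained denoiser conditioned on a prompt, and it assumes all data for that prompt sits at a single point, `prompt_target`. The noise level `alpha` and step size `lr` are likewise arbitrary assumptions.

```python
import math
import random


def toy_noise_predictor(x_t, prompt_target, alpha, sigma):
    """Hypothetical denoiser: exact noise estimate when the prompt's data
    distribution is concentrated at prompt_target."""
    return [(xt - alpha * m) / sigma for xt, m in zip(x_t, prompt_target)]


def sds_step(x, prompt_target, rng, lr=0.05, alpha=0.8):
    """One SDS-style update: noise the sample, query the denoiser, and move
    the sample along -(predicted_noise - injected_noise)."""
    sigma = math.sqrt(1.0 - alpha ** 2)
    noise = [rng.gauss(0.0, 1.0) for _ in x]
    # Forward diffusion of the current sample to a noisy version.
    x_t = [alpha * xi + sigma * n for xi, n in zip(x, noise)]
    predicted = toy_noise_predictor(x_t, prompt_target, alpha, sigma)
    # The residual between predicted and injected noise acts as the gradient.
    grad = [p - n for p, n in zip(predicted, noise)]
    return [xi - lr * g for xi, g in zip(x, grad)]
```

Repeating `sds_step` moves the sample toward `prompt_target`, because in this toy setting the residual reduces to a multiple of `x - prompt_target`. With a real diffusion model the same residual is noisy and prompt-dependent, which is why it works as a soft guidance term, not as an exact pull.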

The Advantages of PALP

PALP offers several advantages over existing text-to-image generation methods. By focusing on prompt alignment, PALP reduces the need for extensive prompt engineering and re-sampling. This makes the process more efficient and less time-consuming.

Furthermore, PALP’s ability to generate images that align with complex and intricate prompts sets it apart from traditional methods. The method excels in improving text alignment, enabling the creation of images that capture the nuances and details specified in the prompt.

Evaluating PALP

To assess the effectiveness of PALP, researchers compared its performance with existing state-of-the-art methods such as P+, NeTI, and TI+DB. The evaluation involved both quantitative and qualitative assessments.

Quantitatively, PALP demonstrated superior text alignment (how well the image matches the prompt) while maintaining high image alignment (how well the image preserves the subject). The method outperformed other approaches in terms of prompt fidelity and subject preservation. These results indicate the potential for improved personalized image generation using PALP.
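Alignment metrics of this kind are commonly computed as cosine similarities between embeddings of the generated image, the prompt, and reference images of the subject. The sketch below shows that computation on plain vectors. The article does not specify which encoder or exact formulas the researchers used, so the function names and the averaging over references are assumptions for illustration, and the embeddings are assumed to be non-zero.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def alignment_scores(image_emb, prompt_emb, reference_embs):
    """Return (text_alignment, image_alignment) for one generated image.

    text_alignment: similarity between the image and the prompt embedding.
    image_alignment: mean similarity between the image and each reference
    embedding of the subject.
    """
    text_alignment = cosine_similarity(image_emb, prompt_emb)
    image_alignment = sum(
        cosine_similarity(image_emb, ref) for ref in reference_embs
    ) / len(reference_embs)
    return text_alignment, image_alignment
```

In practice the vectors would come from an image-text encoder, and the scores would be averaged over many generated images and prompts. A method that gains text alignment by ignoring the subject would show up here as a high first score and a low second one.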

Qualitatively, PALP was evaluated using ten different complex prompts, each having at least four diverse elements. The generated images exhibited remarkable alignment with the prompts, showcasing the method’s ability to handle intricate instructions and produce visually coherent results.

Applications of PALP

PALP’s innovative approach to personalized text-to-image generation opens up a wide range of applications across various fields. Content creation stands to benefit significantly from PALP, as it allows for the generation of images tailored to specific prompts, styles, and atmospheres. This can enhance the visual appeal and engagement of content across platforms.

PALP also holds promise in on-demand image generation. The ability to accurately align with complex prompts makes it ideal for platforms requiring customized visual content, such as social media, advertising, and e-commerce. PALP can help streamline the image creation process by automatically generating personalized visuals that align with user preferences.

Moreover, PALP’s advancements in prompt alignment can be leveraged in fields such as virtual and augmented reality, where the generation of immersive and personalized environments is paramount. The method’s ability to align with specific prompts and capture intricate details can enhance the realism and user experience of virtual worlds.

Conclusion

PALP, the personalization method introduced by researchers from Google AI and Tel-Aviv University, targets a persistent weakness of personalized text-to-image generation. By prioritizing prompt alignment and leveraging pre-trained models’ knowledge, PALP offers enhanced personalization capabilities without compromising prompt fidelity. The method’s effectiveness in aligning with complex prompts opens up possibilities for content creation, on-demand image generation, and immersive experiences. Further work along these lines could give users more reliable ways to turn their ideas into images.

All credit for this research goes to the researchers of this project.